Like some wayward adolescent child, my attention is first drawn to Tetsuo’s continued abysmal performance.

The new configuration set took effect April 6th:

It dipped slightly and then went up, but this is largely market beta, as seen by the new dip around April 27th and by subtracting SPY movements from pct_mag. There’s a little alpha signal in there if you look at it with a microscope, but not enough to ever call profitable, because as soon as market momentum drags it down in a month or a week or whatever, it’ll lose all those gains.

I did some retros on it. Directional accuracy is a piss-poor 58%. Magnitude is so off it may as well be random. This is not performant. When I noticed, I was ready to pull the plug on the whole project. It’s expensive to host, it’s not making money, and I’m running out of resources until I have new revenue streams established. The money just isn’t there.
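For reference, here is a minimal sketch of how the two retro numbers above could be computed. It is not the retro code Tetsuo actually runs; the function names and the flat lists of per-symbol percent moves are assumptions for illustration.

```python
from typing import List, Sequence


def sign(x: float) -> int:
    """Return 1, -1, or 0 for the direction of a move."""
    if x > 0:
        return 1
    if x < 0:
        return -1
    return 0


def directional_accuracy(forecast: Sequence[float], actual: Sequence[float]) -> float:
    """Fraction of forecasts whose direction matched the realized move."""
    if len(forecast) != len(actual) or not forecast:
        raise ValueError("forecast and actual must be non-empty and equal length")
    hits = sum(1 for f, a in zip(forecast, actual) if sign(f) == sign(a))
    return hits / len(forecast)


def excess_returns(symbol_moves: Sequence[float], spy_moves: Sequence[float]) -> List[float]:
    """Subtract the benchmark move from each period's move (hypothetical helper).

    What is left after removing the SPY move is a rough stand-in for alpha;
    it ignores each symbol's actual beta to the market.
    """
    if len(symbol_moves) != len(spy_moves):
        raise ValueError("series must be the same length")
    return [m - s for m, s in zip(symbol_moves, spy_moves)]
```

A DA near 50% on a two-way call is coin-flip territory, which is why 58% with a bad magnitude model isn't enough to trade on.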

So I dug a little deeper, hoping to learn something that will improve future versions, thinking maybe I can host it on a local server later. Much to my chagrin, it was not loading the feature configurations for SIG, which does directional forecasting for each symbol. MAG is useless if DA is low from SIG.

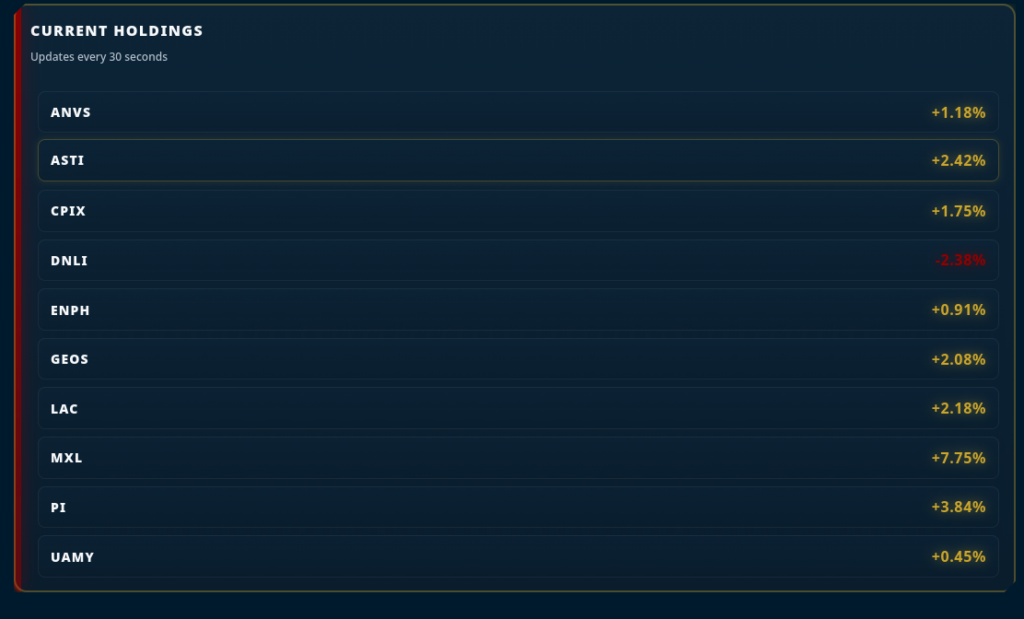

So, I fixed that and re-ran last night’s forecasts before trades. Lo and behold, an immediate, substantial correction:

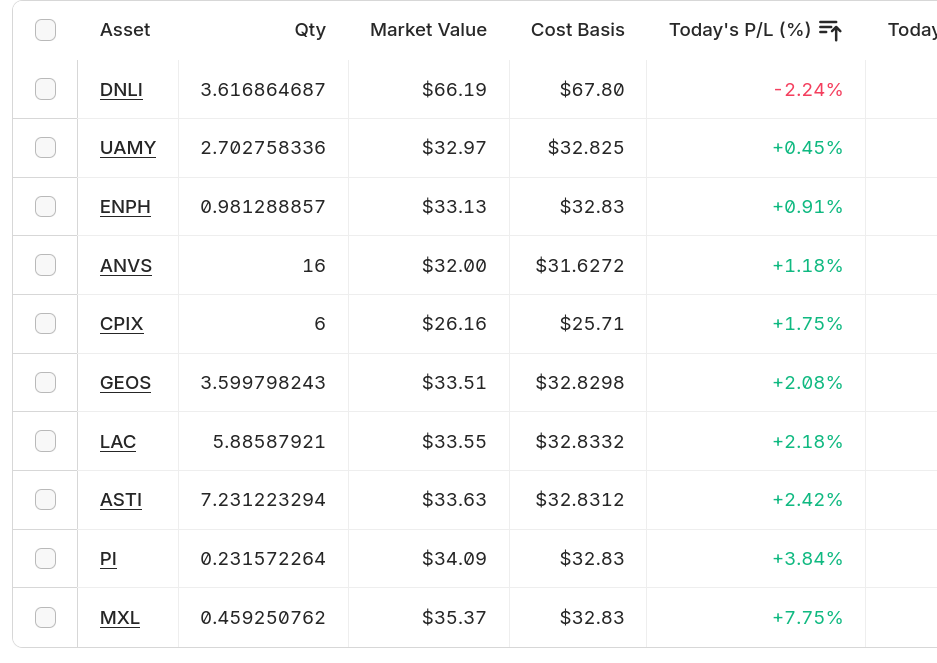

Individuals are even more promising:

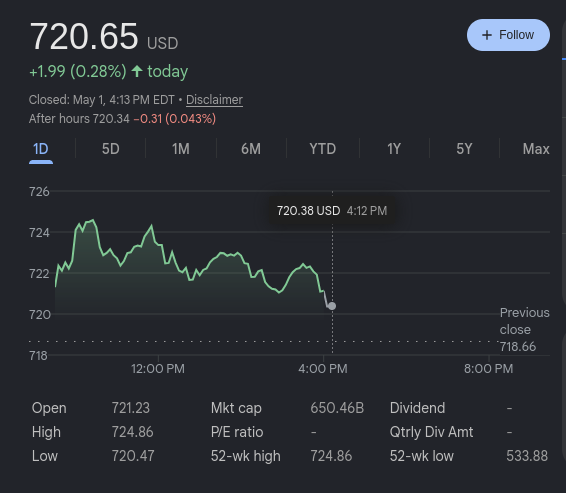

And on DSH:

9 out of 10. And SPY is down today, so that’s an indicator that it’s alpha signal:

So, that’s barely enough wet promises to keep it going. For now.

What a stupid fucking mistake. I did a retro looking back through my notes to see how this wasn’t caught before deploy, and I see exactly what happened. I was exhausted at my job. I was in a rush to get it deployed just to have something up, and then I never came back and cleaned that part of it. Tetsuo/SOL MK-7 was a third gen rush job on two prior generations of rush jobs. I’ve internalized some of the same dumb practices I’ve been telling devs not to do for 10 years. This was stupid.

In any case, I’ve got to continue cutting costs anyway. It costs about 50/month to host the shit, and 99/month for the data access. Then there’s the overhead time spent adjusting and configuring it. Those are cut-or-restructure cues for a tight budget.

So, I’ll be building a Tetsuo SOL MK-8 that’s hosted locally, after resolving an emergent vulnerability impacting all modern Linux systems, which I am closing right now.

Luckily for me, I’ve already dramatically reduced my hosting footprint so it’s just a few hosts.

So, hosted locally, I’ll need some kind of a dynamic DNS solution. I host the DNS for that domain, so maybe a Python tool that manages some DNS records in IPA using a service account, called from cron. It doesn’t need to be extravagant; it just needs to ensure a set of records points at an IP, and then a little router configuration.
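The "ensure a set of records points at an IP" part could look something like this. It's a minimal sketch, assuming the current record values and the actual IPA update are supplied from outside: `update_record` is a hypothetical callable standing in for whatever the IPA service account call ends up being, and the record names in the usage note are made up.

```python
import ipaddress
from typing import Callable, Dict, Iterable, List


def plan_updates(current: Dict[str, str], managed: Iterable[str], wan_ip: str) -> Dict[str, str]:
    """Return {record: wan_ip} for every managed record not already pointing at wan_ip."""
    ipaddress.ip_address(wan_ip)  # raises ValueError if the detected IP is garbage
    return {name: wan_ip for name in managed if current.get(name) != wan_ip}


def reconcile(
    current: Dict[str, str],
    managed: Iterable[str],
    wan_ip: str,
    update_record: Callable[[str, str], None],
) -> List[str]:
    """Apply only the needed changes and return the names that were touched.

    update_record(name, ip) is a placeholder for the real DNS write; nothing
    here talks to IPA directly.
    """
    changes = plan_updates(current, managed, wan_ip)
    for name in sorted(changes):
        update_record(name, changes[name])
    return sorted(changes)
```

Run from cron, something like `reconcile(current, ["www", "dash"], detected_ip, push_to_ipa)` would be a no-op on most runs and only write when the WAN address actually changes.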

UIP – the inventory component. This is good how it is. TDM is acceptable. SAP is good how it is, though standalone, sentiment only has about a 57% DA impact. It really isn’t outperforming pure data analytics but seems to be serving some synergistic quality to the whole system. EXP needs rewritten from the ground up. SIG needs a full re-evaluation. MAG needs rewritten from the ground up, with configuration options for which forecasting methods to use so I can have versioned macros. WIN needs the same. BUY is fine as it is, though it could be more coherent in tracking daily positions to get better/easier metrics from the whole system for DSH to report. BAL is simple and fine how it is. This looks like something I just pick at in the background until I have a full plan.

State management is abysmal through every component. This was a drop-in after the fact when I realized there were cross-component state dependencies. It needs rebuilt from the ground up in a new version.

Flow control needs centralized.

I’m thinking MVC in Flask, using APScheduler for batching. Moving to persistent services that wait for trigger events and report states to a central orchestrative service. It would certainly improve whole-system coherence without losing the benefits of service separation. That would introduce security considerations to the view layer, as currently it’s all static output behind an HTTPD service, so to make it interactive at that level I’d need an auth layer, user permissions, identity management integration. Sessions.
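The "report states to a central orchestrative service" idea can be sketched without committing to a framework. This is a minimal in-memory illustration that deliberately leaves Flask and APScheduler out; the `Orchestrator` class, the `State` values, and the dependency map in the usage note are all assumptions, not the planned design.

```python
from enum import Enum
from typing import Dict, Iterable, List, Tuple


class State(Enum):
    IDLE = "idle"
    RUNNING = "running"
    DONE = "done"
    FAILED = "failed"


class Orchestrator:
    """Tracks reported component states and decides what may be triggered next."""

    def __init__(self, depends_on: Dict[str, Iterable[str]]) -> None:
        self.depends_on: Dict[str, Tuple[str, ...]] = {
            name: tuple(deps) for name, deps in depends_on.items()
        }
        for deps in self.depends_on.values():
            for dep in deps:
                if dep not in self.depends_on:
                    raise KeyError(f"unknown dependency: {dep}")
        self.states: Dict[str, State] = {name: State.IDLE for name in self.depends_on}

    def report(self, name: str, state: State) -> None:
        """Called by a component whenever its state changes."""
        if name not in self.states:
            raise KeyError(name)
        self.states[name] = state

    def runnable(self) -> List[str]:
        """Idle components whose upstream components have all reported DONE."""
        return [
            name
            for name, deps in self.depends_on.items()
            if self.states[name] is State.IDLE
            and all(self.states[dep] is State.DONE for dep in deps)
        ]
```

With a hypothetical map like `{"TDM": [], "SIG": ["TDM"], "MAG": ["SIG"]}`, nothing downstream gets triggered while TDM is still running, which is the cross-component state dependency that is currently handled ad hoc.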

It’s not a damning amount of work, but it is overhead. Still, maintenance operations on it would be a lot smoother, and it would allow feature-set configuration in MAG and SIG to be performed incrementally in between other tasks, which is a critical missing feature right now. If introduced, that would mean it’s always cooking something, but it would eliminate altogether the need for these marathon feature configuration operations that take about 5 hours for SIG and about 40 hours for MAG. If it did a couple hundred a day, then every time the configuration window passes it’s on a whole new set, so it would move the whole system to self-maintaining. Can’t do that in the current model. Not without a rewrite, and the orchestration would be Rube Goldberg-y without that approach. I think I’m going to have to do it just for that benefit alone.
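One way to picture the incremental approach: each day, reconfigure whichever symbols have gone longest without it. This is a rough sketch only; the `last_configured` bookkeeping and the universe size in the usage note are assumptions, since the real symbol count isn't stated here.

```python
from datetime import date
from typing import Dict, List, Optional, Tuple


def next_batch(last_configured: Dict[str, Optional[date]], batch_size: int) -> List[str]:
    """Pick the stalest symbols; ones that were never configured go first."""

    def staleness(item: Tuple[str, Optional[date]]) -> Tuple[bool, date, str]:
        symbol, when = item
        # False sorts before True, so never-configured symbols lead the batch.
        return (when is not None, when or date.min, symbol)

    ordered = sorted(last_configured.items(), key=staleness)
    return [symbol for symbol, _ in ordered[:batch_size]]


def days_per_full_pass(universe_size: int, batch_size: int) -> int:
    """How many daily batches it takes to touch every symbol once (ceiling division)."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return -(-universe_size // batch_size)
```

For a hypothetical universe of 1,000 symbols at 200 a day, `days_per_full_pass(1000, 200)` gives 5, so the whole set would roll over every five days without a marathon run.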

Another benefit is performance would go way up. The local server is largely unoccupied and has a lot more compute power than the Linode it’s currently hosted on. The tradeoff: even with the UPS, one good rainstorm that knocks the power out and I’m down.

Now that I think about it, TDM when it’s running (and UIP for that matter) makes the current dataset unavailable while it’s cooking, and this creates whole-system state dependencies. This could be done cleaner, as it can interrupt ongoing operations when I’m performing maintenance.
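A common way to keep a dataset readable while a new one is being built is to write to a temporary file and swap it in atomically. The sketch below assumes the dataset is a single JSON file on local disk, which may not match how TDM and UIP actually store things; `publish_dataset` is a hypothetical name.

```python
import json
import os
import tempfile
from typing import Any


def publish_dataset(path: str, rows: Any) -> None:
    """Build the new dataset next to the old one, then swap it in with one rename.

    Readers keep seeing the previous file until os.replace() runs, so there is
    no window where the dataset is missing or half-written.
    """
    directory = os.path.dirname(os.path.abspath(path))
    # The temp file must live on the same filesystem for the rename to be atomic.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as handle:
            json.dump(rows, handle)
            handle.flush()
            os.fsync(handle.fileno())
        os.replace(tmp_path, path)
    except BaseException:
        # Clean up the partial file and leave the old dataset untouched.
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)
        raise
```

If a build dies partway through, the old dataset stays in place and downstream components never notice.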

It will need a dedicated orchestration component.

As I type this, the resident dog, Luigi, dropped his tennis ball and let out a bark. It’s almost like he knows it’s his birthday. He’s a fat black lab that I have spoiled now since he was a puppy. He’s 8 years old as of today. He was 8 weeks old when I brought him home in July of 2018, and could fit in a cereal box. Now, he’s 8 years old and weighs about a hundred pounds, and is a very good –albeit chonky– boy. So we threw the tennis ball around until he was too tired to bring it back.

It is also my 41st birthday in two days.

Anyway, in terms of finances, I’m not at the tipping point yet, but it’s on the horizon. I have until no later than September to find new revenue streams. Like Agathocles of Syracuse, the ships are gone, and there is only Carthage or failure ahead. If I fail to meet this deadline, there is no Tetsuo, there is no SILO GROUP, and there is no house for me or for Luigi to live in. And there is no support network to fall back on. It’s as serious as it gets. Quality of living has already degraded to make this all work as long as it has.

I guess I better get more serious about it.

As part of that, I designed, built and rolled out a new system I unimaginatively called “Campaigner”, at campaigner.silogroup.org, that allows SILO GROUP users to build general job campaigns, suitable for job searches and career networking to that aim. It’s actually proven already to be quite useful at discovering opportunities, so it’s a numbers game at this point. Or a time game. Time will tell.

Another tool that might be useful is ATS keyword optimization. So many of those recruiting systems are using shitty AI products to scan for keywords instead of looking at the candidates applying, which makes sense because the job market in that industry is in a crisis right now and every role has hundreds of applicants. Most of the resumes don’t ever get read even after the keyword filters. So I’m considering building a system that takes everything I’ve ever worked on at every place I’ve worked on it, and then takes a job description and aligns the appropriate ATS keywords from my history to the job description and spits out a tailored resume. This would allow me to rapidly apply at many places with tailored resumes optimized for the HR filtering systems they’ll be processed by.
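The matching step at the core of that idea could start as simple set intersection. This is only a sketch under loud assumptions: the work history is a hand-built map of role to skill keywords, matching is exact and single-token (so multi-word skills would need more work), and real ATS products may weigh things very differently.

```python
import re
from typing import Dict, List, Set


def tokens(text: str) -> Set[str]:
    """Lowercased word-like tokens; keeps things like 'c++' and 'c#' intact."""
    return set(re.findall(r"[a-z][a-z0-9+#]*", text.lower()))


def match_keywords(job_description: str, history: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """For each past role, list the skills the job description also mentions."""
    wanted = tokens(job_description)
    return {
        role: sorted(skill for skill in skills if skill.lower() in wanted)
        for role, skills in history.items()
    }
```

The output is a per-role list of keywords worth surfacing in the tailored resume; turning that into an actual document is a separate problem.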

I may pick up a containerization cert. A lot of places don’t understand that architects at my level just view container orchestration as another tool on the OS. I know more about tools I’ve never touched on those systems than people whose whole career involves working on them daily, and that’s very confusing to people who lack the intellectual capabilities of design abstraction and system deconstruction. When you know the kernel capabilities and can design orchestration framework toolchains, tools that use those features and designs fall into place. People that can’t do that fall back on product expertise, and the people that generally hire them don’t know the difference.

Anyway, I’m not done, but, I’m certainly taking hits. As a strong believer in karma, though, I know what’s on the other side of Carthage. They just have to open one door.